Developers building multi-agent architectures (such as those built with LangGraph or Microsoft’s AutoGen) quickly hit a critical bottleneck: state management. As agents coordinate, hand off tasks, and build reasoning graphs, the state store underpinning their execution determines whether the system scales gracefully or collapses under latency and race conditions.

Two architectural patterns are common in production deployments: fast, transient in-memory state using Redis, and durable, globally distributed persistence using Azure Cosmos DB. In this technical deep dive, we’ll explore the trade-offs, configuration strategies, and production use cases for both.

The Architectural Challenge: Why State Matters in Agentic Workflows

Unlike traditional microservices where requests are largely stateless, multi-agent systems are fundamentally stateful. Agents pass massive JSON payloads containing conversation histories, vector embeddings, retrieved documents, and execution traces. If an agent node crashes mid-thought, the orchestrator needs to recover the exact step, context, and reasoning chain.
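
To make the recovery requirement concrete, here is a minimal, framework-agnostic sketch of checkpoint-and-resume. It is not how LangGraph or AutoGen implement checkpointing; `InMemoryStore`, `run_workflow`, and the step functions are hypothetical names, and the dict-backed store merely stands in for a real state store.

```python
import copy


class InMemoryStore:
    """Hypothetical stand-in for a real state store (Redis, Cosmos DB)."""

    def __init__(self):
        self._checkpoints = {}

    def save(self, session_id, next_step, state):
        # Deep-copy so later mutations cannot alter the saved checkpoint
        self._checkpoints[session_id] = (next_step, copy.deepcopy(state))

    def load(self, session_id):
        # Returns (next_step, state); a new session starts at step 0
        next_step, state = self._checkpoints.get(session_id, (0, {}))
        return next_step, copy.deepcopy(state)


def run_workflow(store, session_id, steps):
    """Run steps in order, checkpointing after each one completes."""
    start, state = store.load(session_id)
    for index in range(start, len(steps)):
        state = steps[index](state)
        store.save(session_id, index + 1, state)
    return state


def research(state):
    return {**state, "notes": "three sources found"}


def flaky_summarize(state):
    raise RuntimeError("node crashed")


def summarize(state):
    return {**state, "summary": f"summary of: {state['notes']}"}


store = InMemoryStore()
try:
    run_workflow(store, "session-1", [research, flaky_summarize])
except RuntimeError:
    pass  # the checkpoint written after `research` survives the crash

# The retry resumes at step 1, so `research` does not run again
final_state = run_workflow(store, "session-1", [research, summarize])
```

The key design choice is that the checkpoint records the index of the next step alongside the state, so a retry knows where to resume rather than replaying the whole chain.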

If your state store is too slow, the latency compounds across every agent hop, leading to poor user experiences. If your state store lacks durability, you risk losing critical execution history, making debugging hallucinations impossible.

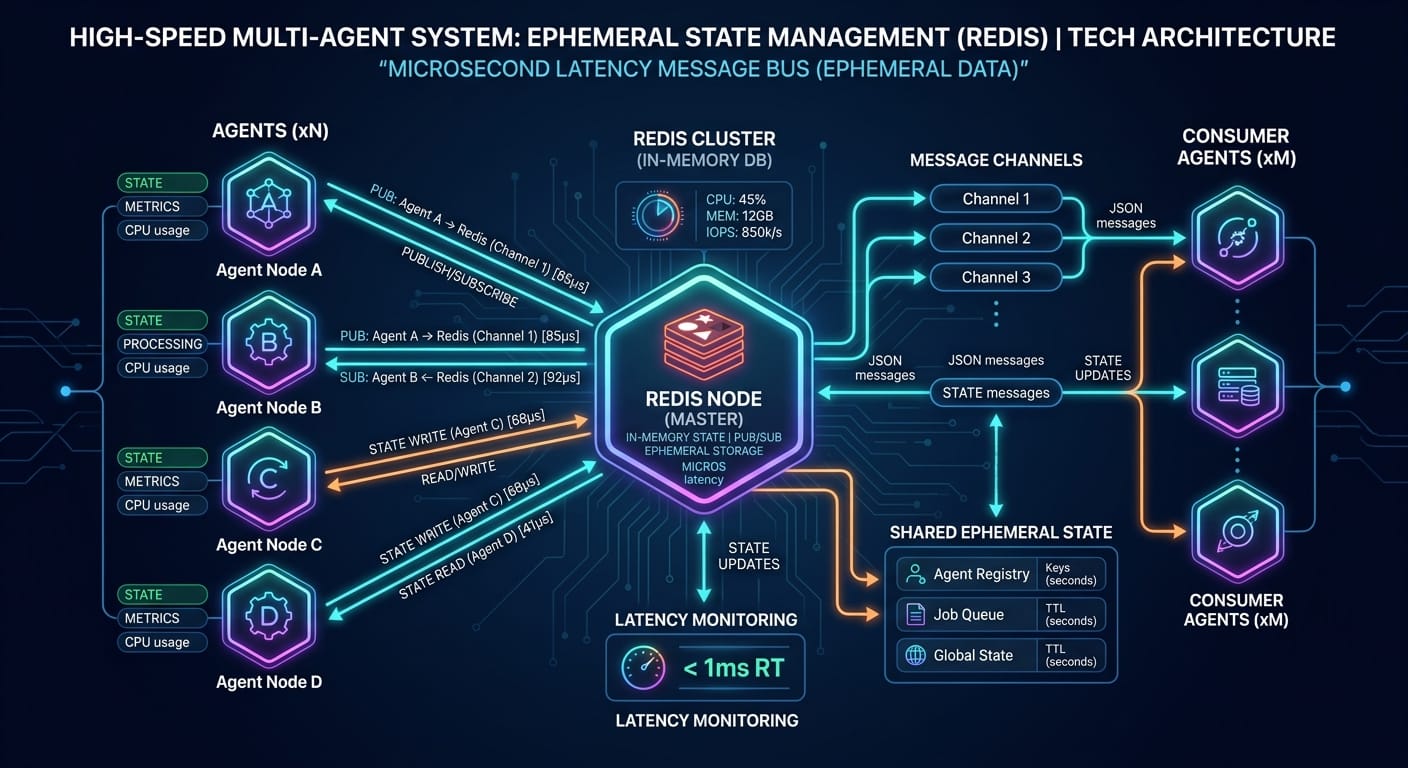

Redis: The Need for Speed and Ephemeral Graphs

Redis is a common choice for systems that need sub-millisecond latency. In a LangGraph setup, you can use a Redis checkpointer to persist the conversational graph between node executions. Because multi-agent orchestration frameworks read and write shared state constantly, serving that state from memory keeps agents from stalling on slow storage round trips.

Implementation: LangGraph Redis Checkpointer

Here is how you can easily configure Redis to manage state using Python:

    from langgraph.checkpoint.redis import RedisSaver

    # Connection URL for a local, single-node Redis; adjust for your deployment
    REDIS_URL = "redis://localhost:6379"

    # from_conn_string yields a checkpointer bound to that connection
    with RedisSaver.from_conn_string(REDIS_URL) as checkpointer:
        # Create the indices the checkpointer relies on
        checkpointer.setup()

        # `workflow` is assumed to be a StateGraph defined elsewhere
        app = workflow.compile(checkpointer=checkpointer)

Pros & Cons of Redis for AI Agents:

- ✅ Sub-millisecond Latency: Perfect for high-frequency trading bots or real-time voice agents where even a 50ms delay ruins the illusion of intelligence.

- ✅ Simplicity & Garbage Collection: When session keys are written with a TTL, short-lived agent sessions disappear on expiry, so large LLM context payloads are cleaned up without a separate garbage-collection job.

- ❌ Durability Overhead: Configuring enterprise-grade, multi-region high availability introduces significant operational overhead, and Redis is not designed to be an auditable ledger.
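
The TTL behavior mentioned in the list above can be sketched with plain `SET ... EX` and `GET` calls. This is an illustration separate from the LangGraph checkpointer: the key naming scheme, the 30-minute lifetime, and the helper names are assumptions, and `client` is expected to behave like a redis-py `redis.Redis` instance.

```python
import json

SESSION_TTL_SECONDS = 30 * 60  # assumed idle lifetime; tune per workload


def session_key(session_id):
    # Hypothetical key naming scheme
    return f"agent:session:{session_id}"


def save_session_state(client, session_id, state, ttl=SESSION_TTL_SECONDS):
    """Write the state as JSON and (re)start the key's expiry timer."""
    client.set(session_key(session_id), json.dumps(state), ex=ttl)


def load_session_state(client, session_id):
    """Return the stored state, or None if the key expired or never existed."""
    raw = client.get(session_key(session_id))
    return None if raw is None else json.loads(raw)
```

Because each `SET` with `ex` replaces the previous expiry, an active session keeps extending its own lifetime, while an abandoned one is removed by Redis once the TTL elapses.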

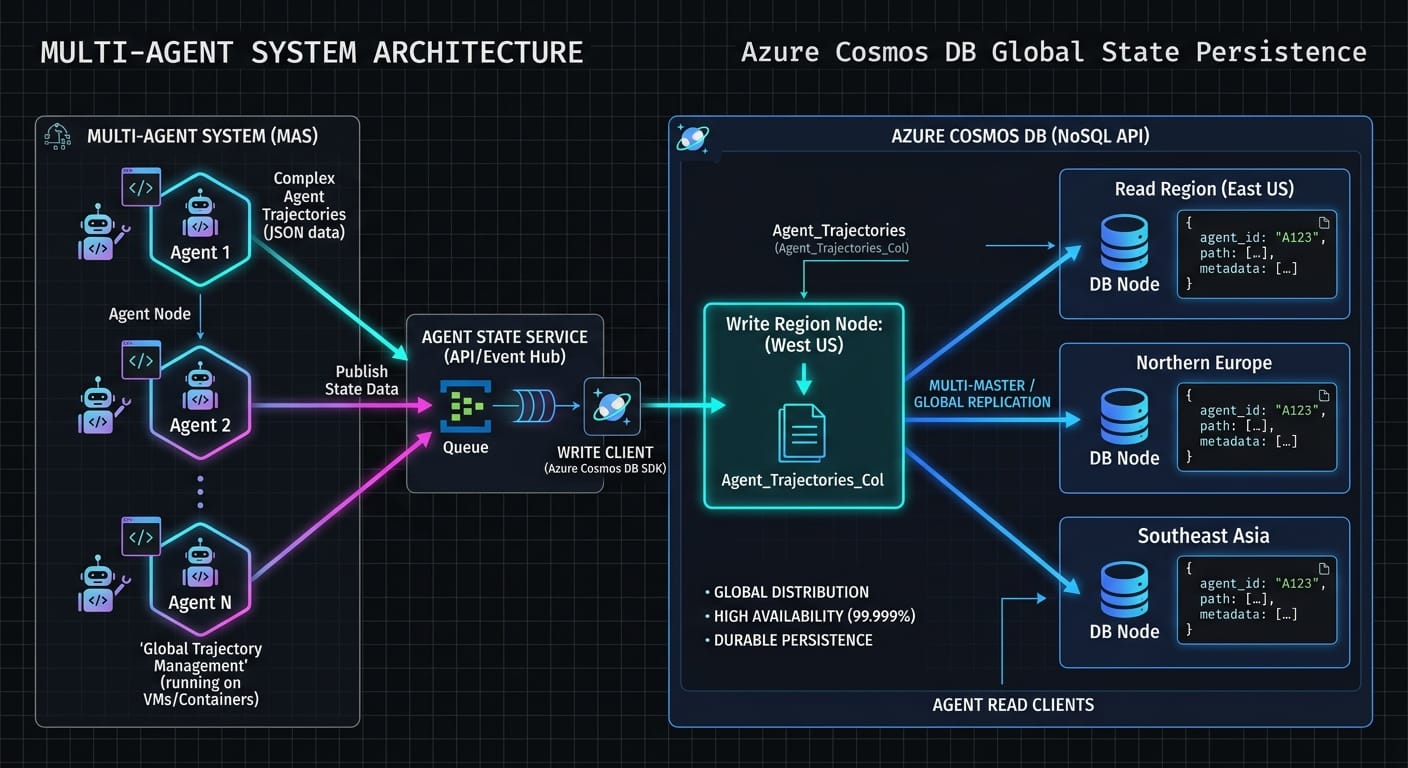

Azure Cosmos DB: Turnkey Durability and Global Distribution

For enterprise developers building robust, auditable AI systems, silent failures and data loss are unacceptable. Azure Cosmos DB provides a compelling alternative. Cosmos DB is a fully managed, globally distributed, multi-model database service that guarantees durable storage of agentic state, meaning that if your orchestration node crashes mid-workflow, the entire state graph can be accurately recovered.

Implementation: Cosmos DB State Persistence

Integrating Cosmos DB allows you to durably log agent steps via the Python SDK:

    from datetime import datetime, timezone

    from azure.cosmos import CosmosClient

    # Placeholder endpoint and key; load real credentials from configuration
    url = "https://your-cosmos-endpoint.documents.azure.com:443/"
    key = "your-primary-key"
    client = CosmosClient(url, credential=key)

    database_name = 'AgentWorkflows'
    container_name = 'StateGraphs'

    # Access the container (assumed to exist already)
    database = client.get_database_client(database_name)
    container = database.get_container_client(container_name)

    def persist_agent_state(session_id, state_payload):
        """Upsert the complex JSON agent state, keyed by session ID."""
        # Reusing the same id replaces the previous document for that session
        container.upsert_item({
            'id': session_id,
            'state': state_payload,
            'timestamp': datetime.now(timezone.utc).isoformat()
        })

Pros & Cons of Cosmos DB for AI Agents:

- ✅ Guaranteed Durability & Indexing: By default, Cosmos DB indexes every property of every item it stores. If each agent step is written as its own item, you can query the full agent history with SQL-like queries to find specific decision points (useful when curating data for RLHF).

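- ✅ Global Distribution: Replicates the state graph across the regions you configure. Reads and writes served from the local region are designed for single-digit millisecond latency at the 99th percentile; replication between regions takes longer.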

- ❌ Latency Constraints: While extremely fast (single-digit milliseconds), it cannot beat an in-memory Redis cache (sub-millisecond).

- ❌ Cost Management: If agents perform thousands of micro-state updates per second, you must carefully batch operations to avoid Request Unit (RU) throttling.
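
Querying agent history only works if earlier steps are still there. The `persist_agent_state` function above reuses the session ID as the item `id`, so each call replaces the previous state. One possible alternative, sketched below, writes one item per step and queries them back with a parameterized query. The item shape, the `/session_id` partition key, and the helper names are assumptions; `container` is expected to behave like the azure-cosmos container client used above.

```python
from datetime import datetime, timezone


def build_step_item(session_id, step, node, state):
    """Build one item per agent step so earlier steps are never overwritten."""
    return {
        "id": f"{session_id}:{step:06d}",  # unique per step within a session
        "session_id": session_id,  # assumed partition key path: /session_id
        "step": step,
        "node": node,
        "state": state,
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }


def log_agent_step(container, session_id, step, node, state):
    container.upsert_item(build_step_item(session_id, step, node, state))


def steps_for_node(container, session_id, node):
    """Return every recorded step that a given node produced in one session."""
    query = (
        "SELECT c.step, c.node, c.timestamp FROM c "
        "WHERE c.session_id = @session_id AND c.node = @node "
        "ORDER BY c.step"
    )
    parameters = [
        {"name": "@session_id", "value": session_id},
        {"name": "@node", "value": node},
    ]
    # Passing partition_key keeps the query inside one logical partition
    return list(container.query_items(
        query=query, parameters=parameters, partition_key=session_id
    ))
```

Scoping the query to a single partition generally costs fewer RUs than a cross-partition scan, which matters given the throttling concern in the list above.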

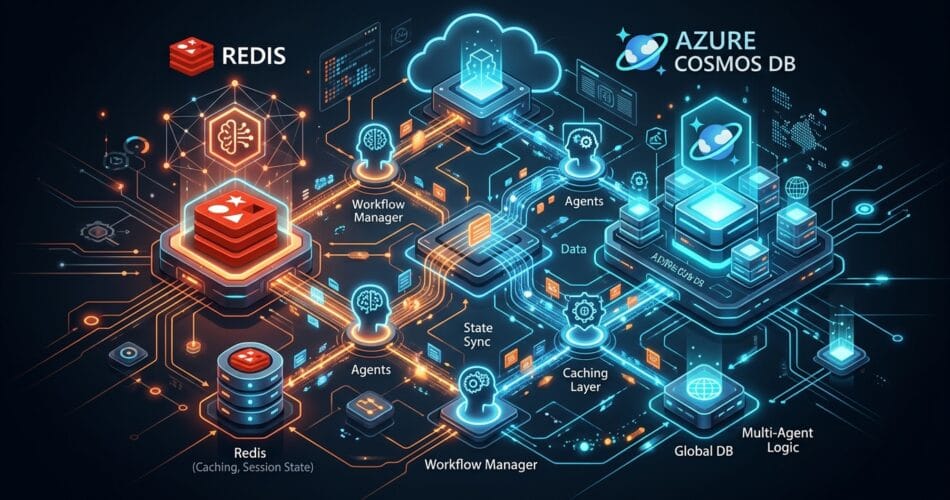

The Hybrid Approach: The Production Standard

In sophisticated deployments, you don’t choose between them; you use both. A common enterprise pattern is:

- Redis (The Hot Path): Short-term, high-frequency state transitions between agents during an active session are stored in Redis.

- Cosmos DB (The Cold Path): Once a session completes or an agent reaches a critical milestone, the final state and reasoning trajectory are asynchronously flushed to Cosmos DB for long-term storage, analytics, and compliance.
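
The hot-path and cold-path split can be sketched as a small router. This is an illustration under stated assumptions, not a production implementation: `SessionStateRouter` and its method names are hypothetical, a plain dict stands in for Redis, and `cold_writer` stands in for a blocking call such as a Cosmos DB upsert.

```python
import asyncio


class SessionStateRouter:
    """Keep live state in a hot store; flush to a cold store on completion."""

    def __init__(self, hot_store, cold_writer):
        self._hot = hot_store            # dict-like stand-in for Redis
        self._cold_writer = cold_writer  # blocking callable(session_id, state)
        self._pending = set()

    def update(self, session_id, state):
        # Hot path: overwrite the live state on every agent transition
        self._hot[session_id] = state

    async def _flush(self, session_id):
        state = self._hot[session_id]
        # Run the blocking durable write in a worker thread
        await asyncio.to_thread(self._cold_writer, session_id, state)
        # Evict from the hot store only after the durable write succeeded
        self._hot.pop(session_id, None)

    def complete(self, session_id):
        # Cold path: schedule the flush without blocking the caller.
        # Must be called from inside a running event loop.
        task = asyncio.create_task(self._flush(session_id))
        self._pending.add(task)
        task.add_done_callback(self._pending.discard)
        return task

    async def drain(self):
        # Wait for outstanding flushes, e.g. during graceful shutdown
        await asyncio.gather(*self._pending)


async def main():
    archive = {}
    hot = {}

    def write_to_archive(session_id, state):
        archive[session_id] = state

    router = SessionStateRouter(hot_store=hot, cold_writer=write_to_archive)
    router.update("session-1", {"step": 1})
    router.update("session-1", {"step": 2, "answer": "done"})
    router.complete("session-1")
    await router.drain()
    return archive, hot


archive, hot = asyncio.run(main())
```

Only the final state reaches the cold store here, which keeps the durable write count at one per session. Evicting from the hot store after the write, not before, means a failed flush leaves the state available for a retry.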

Related Reading: For a deeper dive into how these state managers fit into larger orchestrators, be sure to read our complete guide on Orchestrating AI Agents: LangGraph vs Azure AI Agents.