As large language models (LLMs) and autonomous AI agents become more sophisticated in 2026, the real bottleneck for enterprise AI isn't reasoning; it's memory. If your AI agent cannot efficiently store, retrieve, and contextualize massive amounts of proprietary data, it will hallucinate or fail at complex tasks. This is where vector databases come in.

Unlike traditional relational databases, which match on exact values or keywords, vector databases search by semantic similarity. In this guide, we'll explore why vector databases are the backbone of Retrieval-Augmented Generation (RAG) and compare the top options available for developers.

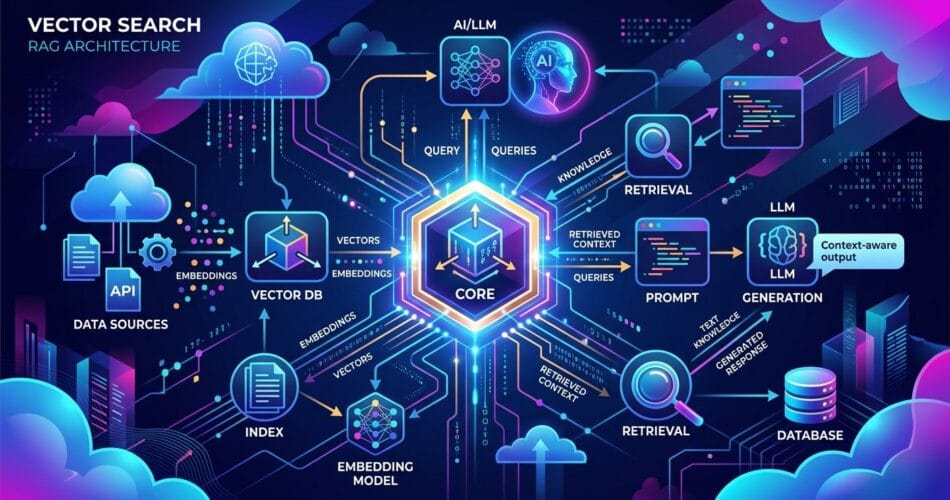

How Vector Databases Work

When you feed text (like the contents of a PDF document) into an embedding model (like OpenAI's text-embedding-3-small), the model converts that text into a high-dimensional array of numbers: a vector. This vector represents the semantic meaning of the text.

A vector database stores these arrays. When a user asks a question, the agent converts the question into a vector and queries the database for the “nearest neighbors” in that high-dimensional space. The results are semantically related to the question, even if they don't share the exact keywords.
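
To make the nearest-neighbor idea concrete, here is a minimal sketch in plain Python. It assumes cosine similarity as the distance measure and uses made-up 3-dimensional vectors with hypothetical document ids; real embeddings have hundreds or thousands of dimensions. It also scans every vector, whereas production vector databases typically rely on approximate indexes (such as HNSW) to avoid a full scan.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def nearest_neighbors(query, vectors, k=2):
    """Return the ids of the k stored vectors most similar to the query."""
    ranked = sorted(
        vectors,
        key=lambda doc_id: cosine_similarity(query, vectors[doc_id]),
        reverse=True,
    )
    return ranked[:k]

# Hypothetical toy "embeddings" keyed by document id.
store = {
    "vacation-policy": [0.9, 0.1, 0.0],
    "expense-policy": [0.7, 0.3, 0.1],
    "gpu-cluster-runbook": [0.0, 0.2, 0.9],
}

# Stands in for the embedded form of a question about HR policy.
query_vector = [0.8, 0.2, 0.0]

print(nearest_neighbors(query_vector, store, k=2))
```

The two policy documents rank ahead of the runbook because their vectors point in nearly the same direction as the query, regardless of which words the underlying texts share.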

Top Vector Databases in 2026

The landscape has matured significantly. Here are the leading options depending on your architecture:

1. Pinecone: The Developer Favorite

Pinecone remains one of the most popular fully managed vector databases. It is easy to set up and integrates well with frameworks like LangChain and LlamaIndex.

- Pros: Fully managed (serverless), ultra-fast querying, massive community support.

- Cons: Can get expensive at enterprise scale; closed source.

2. Qdrant: The Performance Workhorse

Written in Rust, Qdrant is known for its blistering speed and memory efficiency. It offers both a cloud-managed version and an open-source self-hosted option.

- Pros: Extremely fast, handles rich metadata filtering brilliantly, open-source core.

- Cons: Slightly steeper learning curve than Pinecone.
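
Metadata filtering means restricting the similarity search to records whose attached fields match some condition. The sketch below illustrates the idea in plain Python; it is not Qdrant's client API. The `points`, `payload`, and `must` names loosely mirror Qdrant's terminology, while the data, the dot-product scoring, and the `filtered_search` helper are assumptions made for illustration.

```python
def filtered_search(query, points, must, k=2):
    """Rank only the points whose payload matches every key/value in `must`."""
    def dot(a, b):
        return sum(x * y for x, y in zip(a, b))

    # Step 1: keep points whose metadata satisfies all conditions.
    candidates = [
        p for p in points
        if all(p["payload"].get(key) == value for key, value in must.items())
    ]
    # Step 2: rank the survivors by similarity to the query vector.
    candidates.sort(key=lambda p: dot(query, p["vector"]), reverse=True)
    return [p["id"] for p in candidates[:k]]

# Hypothetical points: an id, a toy 2-d vector, and a metadata payload.
points = [
    {"id": 1, "vector": [0.9, 0.1], "payload": {"dept": "finance", "year": 2025}},
    {"id": 2, "vector": [0.8, 0.2], "payload": {"dept": "legal", "year": 2025}},
    {"id": 3, "vector": [0.2, 0.8], "payload": {"dept": "finance", "year": 2025}},
    {"id": 4, "vector": [0.95, 0.05], "payload": {"dept": "finance", "year": 2023}},
]

print(filtered_search([1.0, 0.0], points, must={"dept": "finance", "year": 2025}))
```

Point 4 is the closest vector overall, but it is excluded because its year does not match. That is the behavior you want when an agent must only see documents from a given department or time range.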

3. Azure AI Search (formerly Cognitive Search)

If you are building enterprise applications on the Microsoft stack, Azure AI Search is the heavyweight champion. It combines vector search with traditional BM25 keyword search (hybrid search), which often yields better relevance than either method alone.

- Pros: Enterprise-grade security, native integration with Azure OpenAI and Semantic Kernel, excellent hybrid search capabilities.

- Cons: Complex to provision, enterprise pricing tiers.
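
Hybrid search needs a way to merge a keyword ranking and a vector ranking into one list. A common technique is Reciprocal Rank Fusion (RRF), which Microsoft's documentation describes for hybrid queries. The following is a generic sketch of RRF, not Azure's implementation; the document ids are hypothetical and `k=60` is the constant conventionally used with this formula.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked id lists; each id scores sum(1 / (k + rank))."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first.
    return sorted(scores, key=scores.get, reverse=True)

keyword_hits = ["doc-a", "doc-b", "doc-c"]  # hypothetical BM25 ranking
vector_hits = ["doc-c", "doc-a", "doc-d"]   # hypothetical vector ranking

print(reciprocal_rank_fusion([keyword_hits, vector_hits]))
```

Documents that appear near the top of both lists (here `doc-a` and `doc-c`) rise above documents that only one method found. RRF uses only rank positions, so it does not need the two scoring scales to be comparable.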

4. PostgreSQL (with pgvector)

If you already have a massive PostgreSQL infrastructure, you don't necessarily need a dedicated vector database. The pgvector extension allows you to store and query embeddings directly alongside your relational data.

- Pros: No new infrastructure to manage, ACID compliance, query vectors and relational data in the same SQL statement.

- Cons: Not as fast as purpose-built vector databases at very large scale (100M+ vectors).
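
As a rough sketch of what "vectors and relational data in the same SQL statement" can look like, the snippet below prepares pgvector SQL from Python. The `documents` table, its columns, and the `to_pgvector_literal` helper are hypothetical. The `%(name)s` placeholders assume a driver such as psycopg that supports named parameters. Nothing here connects to a database; it only builds the statements and parameters.

```python
def to_pgvector_literal(embedding):
    """Format a list of numbers as pgvector's text input, e.g. '[0.1,0.2,0.3]'."""
    return "[" + ",".join(str(float(x)) for x in embedding) + "]"

# Hypothetical schema: a table with a 1536-dimension embedding column.
SETUP_SQL = """
CREATE EXTENSION IF NOT EXISTS vector;
CREATE TABLE IF NOT EXISTS documents (
    id bigserial PRIMARY KEY,
    department text,
    content text,
    embedding vector(1536)
);
"""

# `<=>` is pgvector's cosine-distance operator; smaller means more similar.
# The WHERE clause is an ordinary relational filter in the same statement.
SEARCH_SQL = """
SELECT id, content
FROM documents
WHERE department = %(department)s
ORDER BY embedding <=> %(query_embedding)s::vector
LIMIT 3;
"""

params = {
    "department": "finance",
    # Toy vector for illustration; a real query needs all 1536 values.
    "query_embedding": to_pgvector_literal([0.1, 0.2, 0.3]),
}

print(params["query_embedding"])
```

With a live connection you would pass `SEARCH_SQL` and `params` to your driver's execute call. For larger tables, pgvector also offers approximate indexes (HNSW and IVFFlat) to speed up the `ORDER BY`.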

Implementing a Basic Vector Search

Here is a quick example of how you might connect to an existing Pinecone index and perform a search using Python and LangChain.

```python
import os

from pinecone import Pinecone
from langchain_openai import OpenAIEmbeddings
from langchain_pinecone import PineconeVectorStore

# Initialize the connection; OpenAIEmbeddings also expects OPENAI_API_KEY in the environment
pc = Pinecone(api_key=os.environ["PINECONE_API_KEY"])
index = pc.Index("enterprise-knowledge-base")

# Set up the embedding model and wrap the index; "text" is the metadata key holding the raw text
embeddings = OpenAIEmbeddings(model="text-embedding-3-small")
vectorstore = PineconeVectorStore(index=index, embedding=embeddings, text_key="text")

# Perform a semantic search and print the three closest chunks
query = "What is our Q3 cloud infrastructure strategy?"
docs = vectorstore.similarity_search(query, k=3)

for doc in docs:
    print(doc.page_content)
```

Conclusion

Choosing the right vector database is critical for the success of your AI agents. If you want maximum developer velocity, start with Pinecone. If you need raw performance and self-hosting, look at Qdrant. If you are deeply embedded in the Microsoft ecosystem, Azure AI Search is unmatched. And if you want to keep your tech stack simple, just enable pgvector on your existing Postgres database.