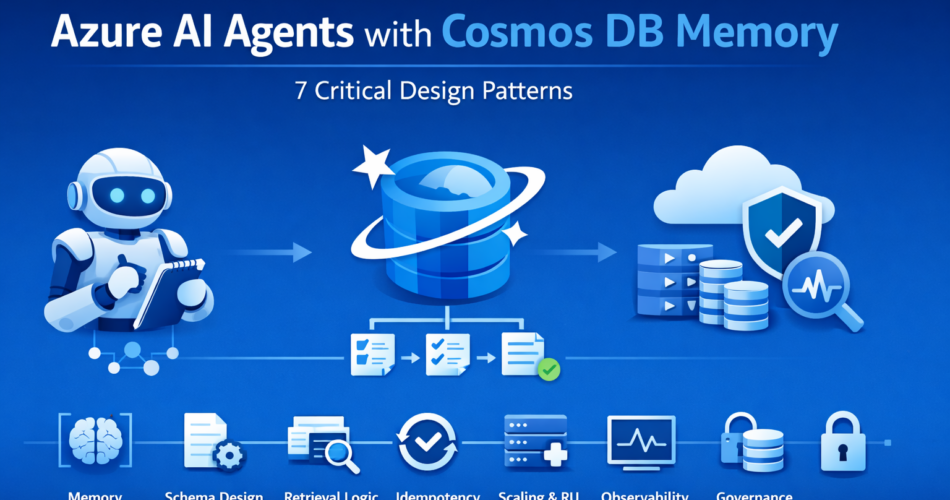

Azure AI agents with Cosmos DB memory become relevant the moment your system stops being a stateless chat interface and starts coordinating decisions across multiple steps. In early prototypes, memory often lives inside the prompt. The model “remembers” because you resend conversation history on every call. It works under light usage.

Then production traffic arrives.

Multiple workflows execute in parallel. Agents append to shared state. Retries duplicate entries. Context grows beyond token limits. Costs rise because every call includes the full past conversation. Nothing crashes, but latency drifts and audit trails become difficult to reconstruct.

Durable memory changes that equation. Instead of embedding history inside prompts, you persist structured state and retrieve only what is needed. That architectural shift introduces scaling, replay, and governance responsibilities, and how well you handle them determines whether the system remains stable.

Before diving into design patterns, one threshold question matters.

When Durable Memory Is Justified

Cosmos-backed memory is appropriate when:

- Workflows require replay and auditability

- Multiple agents coordinate using shared state

- Decisions depend on historical context

- Concurrency is high enough to expose race conditions

It is unnecessary when:

- Interactions are short-lived

- Context does not influence downstream actions

- Stateless responses are sufficient

Durable memory introduces operational overhead. It should solve a real coordination problem, not just formalize chat history.

1. Memory Boundary: Prompt Context vs Structured State

The first architectural decision is where memory lives.

- Prompt-based memory: conversation history is appended to each model call.

- Structured memory in Cosmos DB: state is stored externally and selectively retrieved.

Prompt-based memory is simple but scales poorly. The token cost of each call grows linearly with history length, so the cumulative cost of a conversation grows roughly quadratically. Replay also becomes non-deterministic because reasoning is regenerated from text, not state.

Structured memory separates:

- Ephemeral reasoning context

- Workflow state

- Immutable audit events

Tradeoff:

- Prompt memory minimizes infrastructure work.

- Structured memory requires schema design and partitioning discipline.

The advantage of explicit state is deterministic recovery. When a retry occurs, the system reloads structured data rather than reconstructing reasoning from unbounded text.
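To make the cost difference concrete, here is a back-of-the-envelope sketch. The turn count, tokens per turn, window size, and summary size are made-up illustrative numbers, not measurements, and both function names are hypothetical.

```python
def prompt_memory_tokens(turns: int, tokens_per_turn: int) -> int:
    """Total input tokens when every call resends all prior turns."""
    # Call k carries k turns of history, so the total is 1 + 2 + ... + turns.
    return tokens_per_turn * turns * (turns + 1) // 2


def structured_memory_tokens(turns: int, tokens_per_turn: int,
                             window: int, summary_tokens: int) -> int:
    """Total input tokens when each call sends a summary plus a bounded window."""
    total = 0
    for k in range(1, turns + 1):
        recent = min(k, window)  # never more than `window` recent turns
        total += summary_tokens + recent * tokens_per_turn
    return total


if __name__ == "__main__":
    # Hypothetical workload: 50 turns of about 200 tokens each.
    print(prompt_memory_tokens(50, 200))                                    # 255000
    print(structured_memory_tokens(50, 200, window=5, summary_tokens=300))  # 63000
```

Under these assumptions the bounded approach sends roughly a quarter of the tokens, and the gap widens as the workflow gets longer because one curve is quadratic and the other is linear.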

2. Schema Design: Modeling for Retrieval, Not Storage

Schema decisions directly shape retrieval cost and token usage. Storing large conversation blobs in a single document leads to:

- Large document growth

- Expensive RU consumption

- Slower reads

Instead, design memory as structured events or summaries.

Example document:

    {
      "workflow_id": "wf-2048",
      "agent_id": "risk_agent",
      "memory_type": "summary",
      "content": "Customer risk score calculated at 0.72",
      "step_id": "risk_step_3",
      "timestamp": "2026-02-24T10:15:00Z"
    }

Partition by workflow_id to localize read/write operations.

Three common patterns:

- Single growing document – simple, but RU cost increases as size grows.

- Append-only event log – scalable, but requires aggregation during reads.

- Hybrid (event log + rolling summary) – events are appended; periodic summaries compress history.

Schema should be designed around retrieval patterns. If agents typically need the last five events and a summary, optimize for that query path instead of storing everything in a single record.
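The hybrid pattern can be sketched in memory before committing to a container layout. This is a minimal illustration, not a Cosmos DB implementation: `MemoryEvent`, `WorkflowMemory`, and the `max_events` threshold are hypothetical names, and the string-joining "summary" is a placeholder for whatever summarization step you actually use.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryEvent:
    workflow_id: str
    agent_id: str
    step_id: str
    content: str
    timestamp: str  # ISO 8601 in UTC, so string order matches time order


@dataclass
class WorkflowMemory:
    """In-memory stand-in for one logical partition (one workflow_id)."""
    summary: str = ""
    events: list[MemoryEvent] = field(default_factory=list)
    max_events: int = 5  # hypothetical threshold before compaction

    def append(self, event: MemoryEvent) -> None:
        self.events.append(event)
        if len(self.events) > self.max_events:
            self._compact()

    def _compact(self) -> None:
        # Fold the oldest events into the summary and keep the newest ones.
        # A real system would likely have a model write the summary;
        # joining strings is only a placeholder.
        cut = len(self.events) - self.max_events
        old, self.events = self.events[:cut], self.events[cut:]
        folded = "; ".join(e.content for e in old)
        self.summary = f"{self.summary}; {folded}" if self.summary else folded

    def read_context(self) -> tuple[str, list[MemoryEvent]]:
        """What an agent typically needs: the summary plus recent events."""
        return self.summary, list(self.events)
```

The point of the design is that `read_context` stays bounded no matter how long the workflow runs: the event list never exceeds `max_events`, and older history survives only in compressed form.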

3. Retrieval Strategy: Controlled Context Injection

Schema informs retrieval. Retrieval determines token cost.

A common failure pattern is retrieving full workflow history for every agent call. Instead, retrieve selectively.

Illustrative flow:

    # Query recent memory
    recent_items = query_cosmos(
        workflow_id=workflow_id,
        limit=5,
        order_by="timestamp DESC"
    )

    # Retrieve workflow summary
    summary = get_summary(workflow_id)

    # Construct model context
    context = build_prompt_context(summary, recent_items)
    response = call_model(context)

This pattern:

- Limits token growth

- Controls RU consumption

- Keeps prompts focused

Tradeoff:

- Requires explicit query logic

- Adds application-layer responsibility

Advantage:

- Predictable scaling behavior

Durable memory works only when retrieval is deliberate. Full-history injection is equivalent to prompt-only memory with extra infrastructure.
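One way to keep injection deliberate is to give the context builder an explicit budget. The sketch below is one possible shape for a `build_prompt_context` helper; the character budget, the line format, and the assumption that items arrive newest-first are all illustrative choices rather than anything Cosmos DB prescribes.

```python
def build_prompt_context(summary: str, recent_items: list[dict],
                         max_chars: int = 2000) -> str:
    """Assemble model context from a summary and newest-first memory items."""
    lines = [f"Summary: {summary}"]
    used = len(lines[0])
    selected = []
    for item in recent_items:  # newest first, so the newest survive trimming
        line = f"[{item['timestamp']}] {item['agent_id']}: {item['content']}"
        if used + len(line) > max_chars:
            break  # budget exhausted; older items are dropped
        selected.append(line)
        used += len(line)
    # Present events oldest-first so the model reads them chronologically.
    lines.extend(reversed(selected))
    return "\n".join(lines)
```

A character budget is a crude proxy for tokens. If you have a tokenizer available, counting tokens instead would make the limit more accurate, but the structure stays the same.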

4. Replay Safety and Idempotency

Retries are inevitable under distributed execution. Without safeguards, retries duplicate memory entries.

Mitigation pattern:

- Include a step_id in each memory record

- Perform conditional writes

- Verify existence before append

Example logic:

    if not memory_exists(workflow_id, step_id):
        write_memory_entry(workflow_id, step_id, content)

Tradeoff:

- Extra read-before-write operation

- Slight RU increase

Advantage:

- Prevents duplicate state

- Maintains audit integrity

Replay safety ensures that retries recover from transient failures instead of amplifying side effects.
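A read-then-write check leaves a small window in which two concurrent retries can both see "not found" and both write. One way to close that window is to derive the document id from workflow_id and step_id and let the store reject the duplicate. Cosmos DB enforces id uniqueness within a logical partition, and in the azure-cosmos Python SDK a conflicting `create_item` call should surface as a `CosmosResourceExistsError`. To stay runnable without an account, the sketch below uses an in-memory stand-in: `FakeContainer`, `ConflictError`, and `write_memory_once` are hypothetical names.

```python
class ConflictError(Exception):
    """Stand-in for the store's 'item already exists' error."""


class FakeContainer:
    """In-memory stand-in that enforces unique (partition key, id) pairs."""

    def __init__(self) -> None:
        self.items: dict[tuple[str, str], dict] = {}

    def create_item(self, body: dict) -> dict:
        key = (body["workflow_id"], body["id"])
        if key in self.items:
            raise ConflictError(key)
        self.items[key] = body
        return body


def write_memory_once(container, workflow_id: str, step_id: str,
                      content: str) -> bool:
    """Write a memory entry at most once per step. Returns True if written."""
    doc = {
        "id": f"{workflow_id}:{step_id}",  # deterministic, so retries collide
        "workflow_id": workflow_id,
        "step_id": step_id,
        "content": content,
    }
    try:
        container.create_item(doc)
        return True
    except ConflictError:
        return False  # an earlier attempt already recorded this step
```

This variant also avoids the extra read: the happy path is a single write, and only a retry pays for the rejected attempt.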

5. Scaling Alignment: RU, Concurrency, and Throughput

Cosmos DB throughput is provisioned in Request Units per second (RU/s). Memory growth and concurrency must align with RU provisioning.

Key scaling surfaces:

- Write frequency per workflow

- Concurrent workflow count

- Memory summarization operations

- Cross-partition queries

Before production, simulate load:

- Increase concurrent workflows gradually

- Monitor RU consumption

- Measure query latency

- Track memory document size

Right-sizing principles:

- Partition by workflow_id

- Avoid cross-partition queries

- Disable unnecessary indexing on large fields

- Consider autoscale for unpredictable traffic

Tradeoff:

- Higher baseline RU allocation

- Increased infrastructure planning

Advantage:

- Stable latency under concurrency

Misaligned scaling often leads to throttling (HTTP 429 responses). Throttling triggers retries, and when a retried step includes a model call, token usage increases as well.
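Before a load test, a rough estimate helps you choose a starting RU/s figure. The function below is simple arithmetic under stated assumptions: `required_rus` is a hypothetical helper, and the per-operation RU costs are inputs you would measure from your own documents and queries, not constants.

```python
import math


def required_rus(concurrent_workflows: int, writes_per_sec: float,
                 reads_per_sec: float, ru_per_write: float,
                 ru_per_read: float, headroom: float = 0.2) -> int:
    """Estimate RU/s needed for a steady workload, with headroom for bursts."""
    # RU/s consumed by one workflow, then scaled by concurrency and headroom.
    per_workflow = writes_per_sec * ru_per_write + reads_per_sec * ru_per_read
    return math.ceil(concurrent_workflows * per_workflow * (1 + headroom))


if __name__ == "__main__":
    # Hypothetical inputs: 200 workflows, each doing one write every two
    # seconds and one read per second, with measured costs of 6 and 3 RU.
    print(required_rus(200, 0.5, 1.0, ru_per_write=6.0, ru_per_read=3.0))
```

Treat the result as a starting point for the load simulation above, then adjust it based on the RU consumption and throttling you actually observe.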

6. Observability: Detecting Memory Drift

Memory bloat does not produce immediate failures. It increases cost and latency gradually.

Monitor:

- Document size growth

- RU consumption per workflow

- Retrieval latency

- Token usage correlated to memory size

Example telemetry emission:

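    import logging

    logger = logging.getLogger(__name__)

    # workflow_id, ru_cost, and size come from the surrounding write path.
    logger.info(
        "memory_write",
        extra={
            "workflow_id": workflow_id,
            "ru_consumed": ru_cost,
            "document_size": size,
        },
    )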

With proper monitoring, you can detect:

- Excessive write amplification

- Summarization gaps

- Unexpected growth patterns

Tradeoff:

- Additional telemetry volume

Advantage:

- Early identification of scaling inefficiencies

Observability transforms memory from opaque storage into an operationally visible subsystem.
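Telemetry only helps if something evaluates it. As a minimal sketch, the class below accumulates per-workflow write metrics and returns warnings when a threshold is crossed. `MemoryStats`, its thresholds, and the warning labels are hypothetical. In practice this logic would more likely live in your monitoring platform as alert rules than in application code.

```python
from collections import defaultdict


class MemoryStats:
    """Accumulate per-workflow write metrics and flag suspicious growth."""

    def __init__(self, max_doc_bytes: int, max_total_ru: float) -> None:
        self.max_doc_bytes = max_doc_bytes
        self.max_total_ru = max_total_ru
        self.total_ru: dict[str, float] = defaultdict(float)
        self.largest_doc: dict[str, int] = defaultdict(int)

    def record_write(self, workflow_id: str, ru_consumed: float,
                     document_size: int) -> list[str]:
        """Record one write and return any warnings it triggers."""
        self.total_ru[workflow_id] += ru_consumed
        self.largest_doc[workflow_id] = max(
            self.largest_doc[workflow_id], document_size)
        warnings = []
        if document_size > self.max_doc_bytes:
            warnings.append("document_size_exceeded")
        if self.total_ru[workflow_id] > self.max_total_ru:
            warnings.append("ru_budget_exceeded")
        return warnings
```

A document-size warning usually points to a summarization gap, while a per-workflow RU warning with small documents suggests write amplification, for example from retries.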

7. Governance and Retention Strategy

Durable memory introduces compliance considerations.

Design decisions include:

- Retention duration

- Data residency

- PII storage policy

- Deletion workflows

Options:

- Store full conversation history

- Store structured extracted facts only

Full history improves explainability but increases compliance risk. Structured summaries reduce exposure but may limit reconstruction fidelity.

Retention policies should be enforced automatically, not manually reviewed.

Memory architecture must align with governance requirements from the start. Retrofitting compliance after data accumulation is expensive and disruptive.
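Automatic enforcement can start at write time. Cosmos DB supports a per-item `ttl` property (in seconds, with -1 meaning never expire) that takes effect once time to live is enabled on the container. The sketch below stamps each document with a TTL chosen by memory type. The retention periods, the "event" and "audit" type names, and the `with_retention` helper are illustrative assumptions, not recommendations.

```python
# Hypothetical retention policy, in seconds. -1 means "never expire".
RETENTION_SECONDS = {
    "event": 30 * 24 * 3600,     # raw events: 30 days
    "summary": 365 * 24 * 3600,  # summaries: 1 year
    "audit": -1,                 # audit records: keep indefinitely
}


def with_retention(doc: dict) -> dict:
    """Return a copy of the document with a ttl set from its memory_type."""
    memory_type = doc.get("memory_type")
    if memory_type not in RETENTION_SECONDS:
        # Refuse to store anything that has no declared retention policy.
        raise ValueError(f"no retention policy for memory_type={memory_type!r}")
    return {**doc, "ttl": RETENTION_SECONDS[memory_type]}
```

Failing closed on unknown types is the important design choice here: a new memory type cannot reach storage until someone has decided how long it should live.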

Final Thoughts

Azure AI agents with Cosmos DB memory provide explicit, durable state across workflows. They enable replay safety, shared coordination, and auditability.

They also introduce:

- RU cost management

- Schema discipline

- Retrieval engineering

- Governance enforcement

The core tradeoff is implicit context versus explicit state.

Implicit memory inside prompts is simple but unpredictable at scale. Structured memory is operationally heavier but enables controlled growth and deterministic recovery.

Design memory around retrieval patterns. Persist only what you can govern. Monitor growth continuously.

Durable state is not about storing more data. It is about controlling behavior as systems scale.