Using uv with Docker multi-stage builds wasn’t something I originally planned to do.

I need to admit something. For years, my Python Docker images were an absolute mess. They worked, but every time I ran docker images and saw the file sizes, I felt a little sick.

Gigabyte-sized images. Slow CI pipelines that took just long enough to break my flow. Dependency issues that appeared only in production because my local environment never quite matched CI. I kept telling myself: “This is fine. Everyone’s Python Docker images are heavy.”

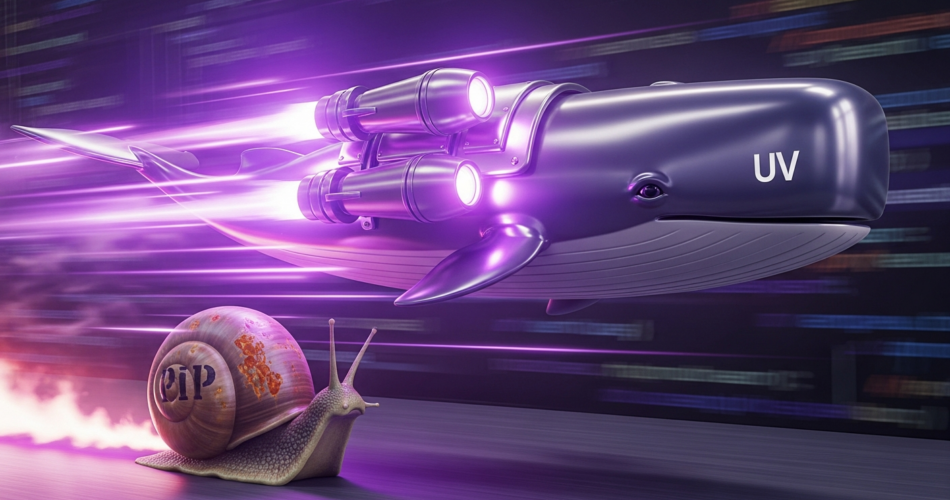

That lie worked until container registry costs started climbing and deployments stretched past ten minutes. This is the story of how using uv with Docker multi-stage builds completely changed how I build Python containers, and why I finally stopped dreading docker build.

The “Good Enough” Trap I Fell Into

I wasn’t careless. I was pragmatic. My setup looked like what most Python teams were doing a few years ago:

- `pip` for dependency installation.
- Single-stage Docker builds.
- Virtual environments inside containers.
- Layers piling up over time.

It was good enough when the project was small and CI ran once a day. But at scale, “good enough” became fragile. My Docker image crossed 1.2 GB, and dependency installation alone took 3-4 minutes per build. I wasn’t building containers anymore. I was maintaining a house of cards.

The Accidental Discovery of uv

I didn’t find uv while searching for Docker tools. I found it because I was frustrated.

Frustrated with pip resolving the same dependency graph again and again. Frustrated with CI jobs timing out because one mirror was slow. Frustrated with dependency conflicts being discovered far too late.

Then I saw a simple claim: “A fast Python package manager written in Rust.” I didn’t trust it. But I tried it locally, and the speed wasn’t incremental; it was obvious.

Why Multi-Stage Builds Finally Made Sense

I had known about Docker multi-stage builds long before this. I avoided them. They felt like an optimization for people with too much time on their hands. My thinking was simple: if the container runs, why complicate the Dockerfile?

But pairing multi-stage builds with uv changed how I thought about containers entirely. Multi-stage builds are not about clever Docker tricks. They are about boundaries: one stage resolves and installs dependencies, and another only runs the application.

The moment I separated those responsibilities, the Dockerfile became easier to reason about, not harder.

Here is roughly what I used to run. Notice how the build tools stay forever, and there is no separation between build and runtime.

```dockerfile
FROM python:3.12-slim

WORKDIR /app

RUN pip install --upgrade pip

COPY requirements.txt .

RUN pip install -r requirements.txt

COPY . .

CMD ["python", "main.py"]
```

This is the modern approach leveraging Astral’s official best practices. We use a builder stage to resolve and install dependencies into a pristine virtual environment (.venv), and then copy only that isolated environment into the final runtime image.

```dockerfile
# Stage 1: Builder
FROM python:3.12-slim AS builder

# Copy uv as a single static binary
COPY --from=ghcr.io/astral-sh/uv:latest /uv /uvx /bin/

# Environment variables for uv
ENV UV_COMPILE_BYTECODE=1 \
    UV_LINK_MODE=copy

WORKDIR /app

# Copy dependency files early to leverage layer caching
COPY pyproject.toml uv.lock ./

# Install dependencies into a new .venv without the project itself
RUN --mount=type=cache,target=/root/.cache/uv \
    uv sync --frozen --no-install-project --no-dev

# Copy application code and install the project
COPY . .
RUN --mount=type=cache,target=/root/.cache/uv \
    uv sync --frozen --no-dev

# Stage 2: Runtime (Slim & Clean)
FROM python:3.12-slim

WORKDIR /app

# Copy only the virtual environment and the application code
COPY --from=builder /app /app

# Put the virtual environment on the PATH
ENV PATH="/app/.venv/bin:$PATH"

CMD ["python", "main.py"]
```

Why The New Setup is a Masterpiece

The new uv sync setup gets three critical things right:

- Zero build tooling in production: The `uv` binary is only present in the builder stage. The final runtime container has absolutely no knowledge of how packages were resolved. It just executes Python.
- Bytecode Compilation: With `UV_COMPILE_BYTECODE=1` set, uv compiles the installed packages to `.pyc` files during the build process, shaving off critical milliseconds during container startup.
- Layer Caching Mastery: Copying `pyproject.toml` and `uv.lock` before the application code lets Docker reuse the dependency layer when only source files change, and BuildKit’s `--mount=type=cache` keeps uv’s download cache between builds, which speeds up subsequent builds drastically.
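The first two points can be sanity-checked from inside the running container. The script below is a minimal sketch, not something taken from the setup above: the function names are made up for illustration, and the module name you pass in is assumed to be one of your own installed dependencies.

```python
import importlib.util
import shutil
import sys
from pathlib import Path


def running_in_venv() -> bool:
    """True when the interpreter was started from a virtual environment."""
    return sys.prefix != sys.base_prefix


def tool_on_path(name: str) -> bool:
    """True when an executable called `name` is found on PATH."""
    return shutil.which(name) is not None


def has_cached_bytecode(module_name: str) -> bool:
    """True when a .pyc file already exists for the module's source file.

    find_spec locates a top-level module without importing it, so a hit
    here means the bytecode was written before this script ran.
    """
    try:
        spec = importlib.util.find_spec(module_name)
    except (ImportError, ValueError):
        return False
    if spec is None or not spec.origin or not spec.origin.endswith(".py"):
        return False
    return Path(importlib.util.cache_from_source(spec.origin)).exists()


def runtime_report(module_name: str) -> dict:
    """Collect the three checks into one dictionary."""
    return {
        "running_in_venv": running_in_venv(),
        "uv_on_path": tool_on_path("uv"),
        "bytecode_precompiled": has_cached_bytecode(module_name),
    }


if __name__ == "__main__":
    # "json" is only a stand-in; pass one of your own dependencies instead.
    module = sys.argv[1] if len(sys.argv) > 1 else "json"
    for check, result in runtime_report(module).items():
        print(f"{check}: {result}")
```

In a runtime image built as described, the expectation would be that the interpreter runs from the virtual environment, that `uv` is not on the PATH, and that a dependency installed with `UV_COMPILE_BYTECODE=1` already has its bytecode. Treat any other result as a hint that something from the builder stage leaked through or that a build setting was not applied.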

Real Results (No Marketing Numbers)

I didn’t flip the switch overnight. I ran both builds side by side in my CI pipeline. The uv-based build consistently finished before pip had even completed downloading the wheels.

| Metric | Old Setup (pip) | New Setup (uv sync + Multi-Stage) |
|---|---|---|
| Image Size | ~1.2 GB | ~240 MB |
| Dependency Install | ~3-4 min | ~25 sec |
| CI Build Time | ~7 min | ~2 min |
| Build Reliability | Inconsistent | Predictable |

This wasn’t an optimization. It was a complete reset.
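To put those figures in relative terms, here is a small worked calculation. It is only arithmetic on the approximate numbers from the table, and it assumes 1.2 GB is counted as 1200 MB; the helper names are illustrative.

```python
def percent_reduction(before: float, after: float) -> float:
    """Percentage by which `after` is smaller than `before`."""
    return 100 * (before - after) / before


def speedup(before: float, after: float) -> float:
    """How many times smaller or faster `after` is compared with `before`."""
    return before / after


if __name__ == "__main__":
    # Image size in MB, taking 1.2 GB as 1200 MB (an assumption).
    print(f"image size: {percent_reduction(1200, 240):.0f}% smaller")
    # Dependency install in seconds: 3-4 minutes versus about 25 seconds.
    low, high = speedup(180, 25), speedup(240, 25)
    print(f"dependency install: {low:.1f}x to {high:.1f}x faster")
    # CI build time in minutes.
    print(f"CI build: {speedup(7, 2):.1f}x faster")
```

That works out to an image roughly 80% smaller, dependency installs somewhere between 7 and 10 times faster, and CI builds about 3.5 times faster. Since the inputs are rounded, the ratios are rough too.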

What This Changed in My Day-to-Day Work

The biggest improvement wasn’t the image size or the CI speed. It was confidence.

I stopped worrying about whether my local environment matched production. I stopped second-guessing dependency upgrades. I stopped treating Dockerfiles as fragile artifacts no one wanted to touch. Rebuilding images became cheap, predictable, and safe. That changed how often I refactor and how confidently I ship.

Final Thoughts

uv didn’t save my Docker images by itself. The combination did. uv gave me fast, deterministic installs, while Docker multi-stage builds provided clean separation.

Together, they forced me to treat containers like production artifacts, not temporary shells. I stopped fighting Docker. I stopped babysitting CI. And for the first time in years, my Python containers felt boring again.

That’s the highest compliment I can give.
